AI Transformation

Your AI transformation has a technology layer. It also has a human one. Most organizations only build the first.

Automation without alignment is not transformation.

It is acceleration in a direction no one has fully examined.

Every serious organization is somewhere in the middle of an AI transformation right now. New tools are being deployed. Processes are being redesigned. Efficiency metrics are improving. And in boardrooms across every industry, leadership teams are asking the same question: why is this not moving the way we expected?

The answer is almost never the technology.

AI transformation fails at the same point every other transformation fails: the gap between what the organization intends and how it actually operates. The technology is capable. The people are talented. The plan is sound. And something keeps getting in the way.

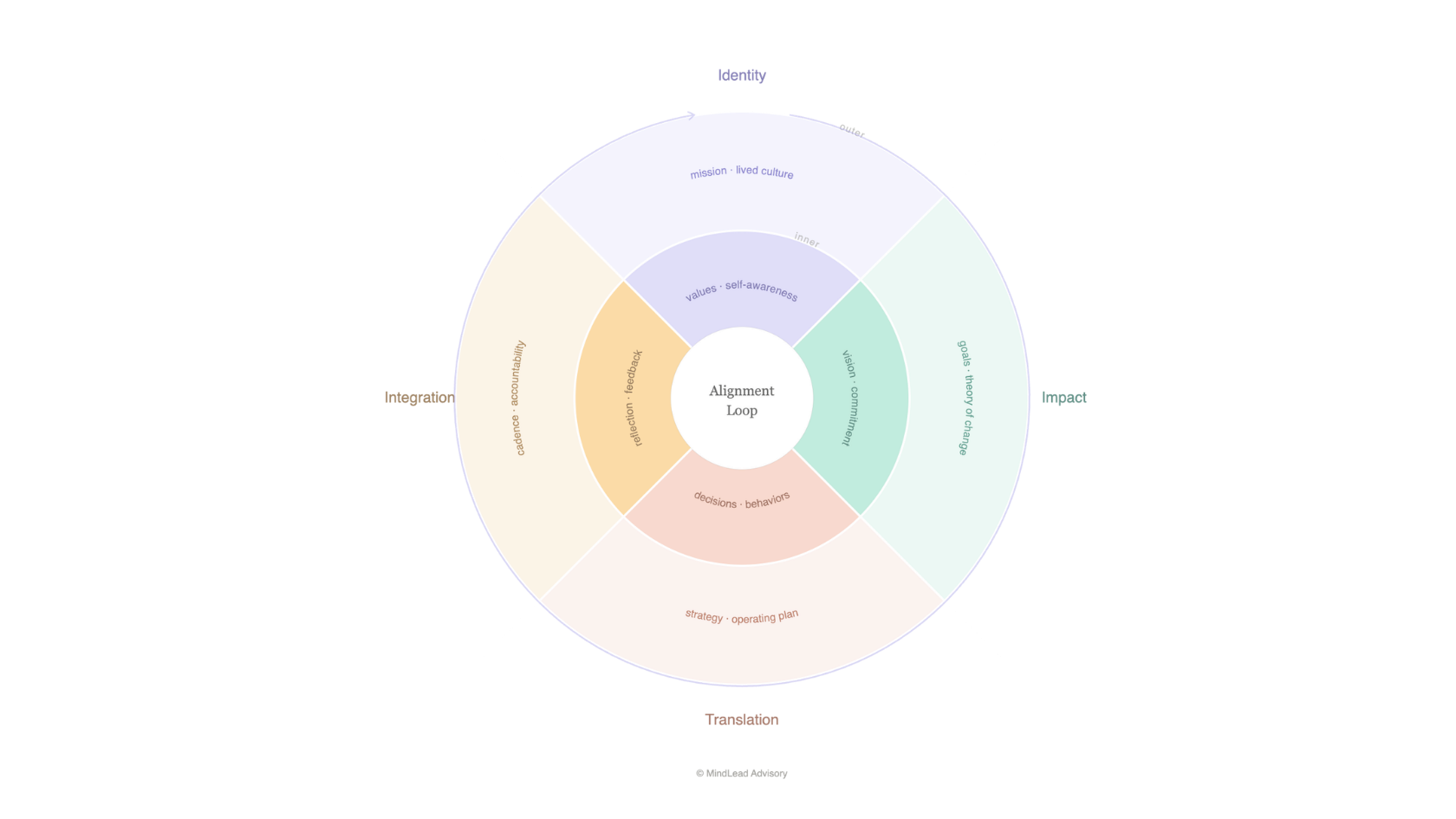

That something has a name. It is alignment. And it lives at the intersection of the inner and the outer: the mindset, culture, and leadership clarity that determine whether a transformation holds, and the operating structures that make it real.

What AI should own. What humans must own.

Before any AI transformation can hold, one question needs an honest answer: what should AI govern, and what must humans govern? Most organizations are answering it by default, letting the boundary form around what the technology can do rather than what the organization should preserve.

AI should own

Pattern recognition at scale

Compliance verification across large networks

Data synthesis and reporting

Demand forecasting and inventory optimization

Routine decision support and scheduling

Real-time performance monitoring

Personalization at volume

Anomaly detection and early warning

Humans must own

Judgment calls that carry ethical weight

Relationships that require genuine presence

Decisions made with incomplete information where values must govern

The conversation with the person who is struggling

Creative synthesis rooted in lived experience

Defining what AI is for and what it should never touch

Reading the room when the data says one thing and the culture says another

Anything where being wrong has consequences a system cannot understand

Approach

How we work

We use the Alignment Loop as the diagnostic and design framework for every AI transformation engagement. Four dimensions. Each with an inner layer the technology cannot address, and an outer layer where the technology creates new capability. Both matter. Neither alone is sufficient.

What this looks like in practice

Phase 1

The Diagnostic

We map where your organization sits across the four dimensions of the Alignment Loop in the context of your AI transformation. Where is the inner layer strong? Where has it been skipped?

Phase 2

The Alignment Design

We design the inner-layer work your transformation needs: the leadership alignment, the human/AI boundary framework, and the operating norms and feedback mechanisms that make the technology serve the organization rather than the other way around.

Phase 3

Integration and Momentum

We build the feedback loops and operating cadence that keep the transformation honest over time, and the culture signals that tell your people what they are for in this new configuration.

Who we work with

Who this is for

CCOs, COOs, and transformation leaders who sense that the technology is not the hardest part. Founders who want to build AI into their organization in a way that strengthens rather than hollows out the culture they have built. Leadership teams who have seen a transformation stall and want to understand why before the next one begins.

Who this is not for

Organizations looking for an AI implementation partner. We are not the team that builds the tools. We are the team that ensures your organization is aligned enough to benefit from them, and human enough to know when not to.

Where is your AI transformation losing alignment?

Most organizations find out eighteen months after deployment, in a culture survey, a talent retention problem, or a quality issue the dashboard did not predict. The diagnostic conversation finds it earlier.